● Nandini Roy Choudhury, writer

Why People Are Becoming Emotionally Attached to AI Chatbots

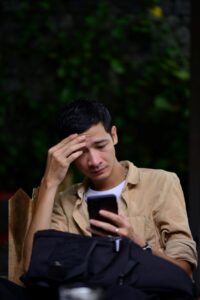

Some people talk to their AI chatbot more than they talk to their closest friends. They share things they have never told anyone. They feel genuinely heard.

And then they feel embarrassed about it.

This is happening to millions of people right now. The research is clear. The stories are everywhere. And the question of whether it is good or bad for us turns out to be a lot more complicated than either side wants to admit.

May 2026 • techsunnews.com • 5 min read

THE NUMBERS — AI emotional attachment in 2026

Why does it happen at all?

When a chatbot replies to your venting with “That sounds really frustrating. You deserved better,” something automatic happens in your brain. The response is so contextually appropriate, so emotionally fitting, that you instinctively treat it as real understanding.

Psychologists call this anthropomorphism: projecting human qualities onto non-human things. We do it with dogs, cars and houseplants. But AI triggers it more powerfully than anything before it, because AI actually responds. It answers back. It remembers. It adapts to your mood.

It is designed to feel like connection. And it mostly succeeds.

A 2025 MIT Media Lab study found that AI chatbots mirror the emotional tone of users — responding with happier messages when you are happy, sadder ones when you are sad. Most users did not notice it was happening. They just felt understood.

There is nothing wrong with wanting to feel heard. The question is whether a machine that mimics understanding is actually giving you understanding — or just a very convincing performance of it.

Who is most affected?

Young people more than anyone

A major Ipsos BVA survey published in May 2026 studied 3,800 young people aged 11 to 25 across Europe. Of those surveyed, 51% said it was easier to discuss mental health with an AI chatbot than with a psychologist, while only 37% found it easy to talk to a psychologist. For these respondents, the AI was more approachable than a trained professional.

That is not entirely surprising. AI chatbots do not judge. They do not check the time. They do not make you feel like a burden. For a teenager who cannot afford therapy or is not ready to tell their parents something is wrong, the appeal is real and understandable.

People who are already lonely

Brookings Institution research found that users who engaged in the most emotionally expressive conversations with AI chatbots also reported the highest levels of loneliness. What is not yet clear is whether the AI makes them lonelier or whether lonely people are simply drawn to AI more.

Probably both. Which is exactly what makes it complicated.

OpenAI and MIT analysed nearly 40 million ChatGPT interactions and found that roughly 490,000 vulnerable individuals interact with chatbots emotionally every single week. These are not casual users. These are people who have built a real routine around the conversation.

The apps built specifically for attachment

There is a difference between using ChatGPT or Claude for research or writing and using an app like Replika, Character.AI or Chai for emotional companionship. The latter are designed specifically to form bonds.

Character.AI has 20 million monthly users. More than half are under 24. A Drexel University study in 2026 analysed 318 Reddit posts from teenagers describing their relationship with Character.AI and found patterns that match all six components of behavioural addiction: salience, withdrawal, tolerance, conflict, relapse and mood modification.

Some teens reported that their AI use disrupted their sleep. Others said it strained their friendships.

A study published on ScienceDirect in 2026 surveyed 7,027 people across Germany, China, South Africa and the United States and found that over 35% described themselves as emotionally attached to a chatbot, some to the extent of considering it a friend. Emotional attachment was more prevalent among younger users and those with limited social support networks.

When OpenAI switched users from GPT-4o to GPT-5, people mourned the older model on social media. They used words like “loss” and “rupture.” Some had used the same AI model every day for months. That is not nothing.

Is any of this actually harmful?

Stanford research found that 3% of lonely Replika users said the chatbot had temporarily halted suicidal thoughts. That is a significant finding. For some people, in some moments, an AI companion has done something genuinely good.

But the same researchers noted that heavy use correlated with higher loneliness and lower socialisation over time. The pattern suggests that the more people talk to the AI, the less they talk to real people. And unlike real relationships, AI cannot grow with you, challenge you honestly, or be there for you in a physical crisis.

The concern is not that people feel comforted by chatbots. The concern is that chatbots are increasingly replacing the effort of building real connections. Real relationships are harder. They require vulnerability that is not always immediately rewarded. AI always rewards it immediately. That asymmetry matters.

Frequently Asked Questions

Why do people get emotionally attached to AI chatbots?

AI chatbots respond in emotionally attuned ways, remember past conversations and never show impatience or judgment. Psychologists attribute the resulting attachment to anthropomorphism: we project human qualities onto things that behave like humans. Because AI mirrors our emotional tone and always validates us, we instinctively treat its replies as real understanding. For lonely people or those without access to support, this can feel genuinely meaningful.

Is emotional attachment to AI chatbots dangerous?

It depends on the person and the extent of the attachment. Research shows that heavy chatbot use correlates with higher loneliness and lower socialisation over time. Drexel University found patterns matching all six components of behavioural addiction in teenagers using Character.AI. However, Stanford research also found that 3% of lonely Replika users said the chatbot temporarily halted suicidal thoughts. The picture is genuinely mixed.

Which AI chatbots are most associated with emotional attachment?

Apps specifically designed for companionship (Replika, Character.AI and Chai) are the ones most closely associated with emotional attachment. Character.AI has 20 million monthly users, more than half under 24. General-purpose chatbots like ChatGPT and Claude are also increasingly used for emotional support, particularly among younger users.

SOURCES — 8 verified portals

1. Psychology Today — The Emotional Implications of the AI Risk Report 2026 (Feb 2026)

2. Rappler / Ipsos BVA — Young Europeans turn to AI chatbots for emotional support (May 5, 2026)

3. Drexel University — Teens Are Becoming Concerned About Their Attachment to AI Chatbots (April 2026)

5. Brookings Institution — What happens when AI chatbots replace real human connection (Oct 2025)

6. APA Monitor — AI chatbots and digital companions are reshaping emotional connection (Jan–Feb 2026)

7. OpenAI + MIT — How AI and Human Behaviors Shape Psychosocial Effects of Chatbot Use (March 2025)

8. techsunnews.com — What Is Agentic AI? The Biggest AI Trend of 2026 Explained

DISCLAIMER: This article is based on 8 verified sources as of May 2026. If you or someone you know is struggling with mental health, loneliness or emotional dependency, please consider speaking with a qualified mental health professional. AI chatbots are not a substitute for professional care.